The Agentic Era

The Shift From Cheaper Thinking to Cheaper Doing

On a Friday evening in San Francisco, a software engineer types a few sentences into a terminal, closes their laptop, and goes home. While they sleep, three AI agents work through the weekend: one writes the code, another reviews it, a third runs tests and files pull requests. By Monday morning, a feature that would have taken a team two weeks is sitting in their inbox, waiting for a final human read-through.

This isn’t a thought experiment. It’s how a growing number of developers are now working, and it points to something larger than another wave of productivity software. For the past two years, the conversation around AI has been about cheaper cognition — better answers, faster summaries, smarter chatbots. The shift happening now, under the banner of “AI agents,” is categorically different. It’s about cheaper execution. And once execution gets cheap, the structure of knowledge work — and eventually a lot more than knowledge work — starts to change.

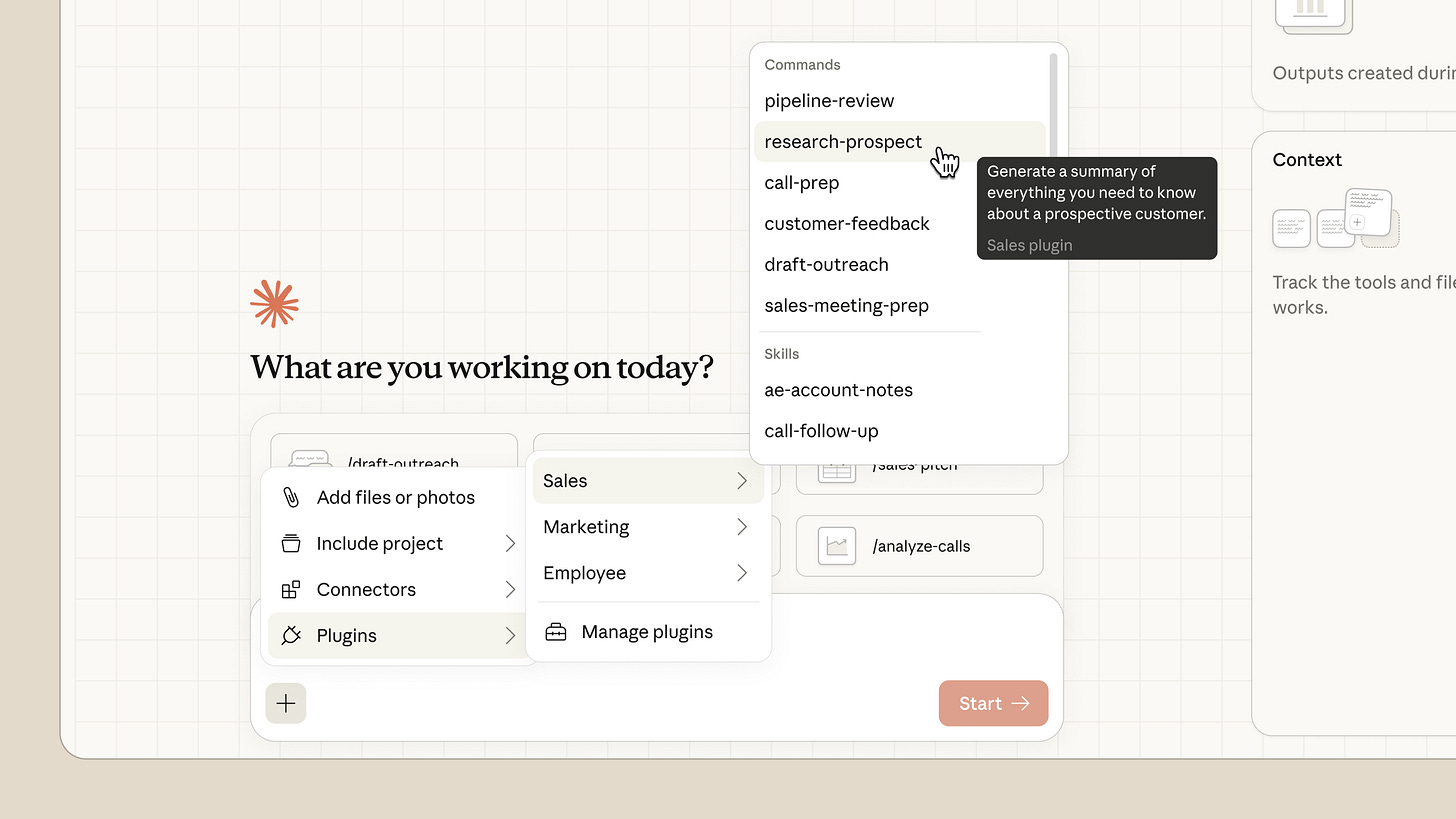

The word “agent” has been stretched in every direction over the past year, so it’s worth pinning down. An AI agent is a system that uses a large language model as its reasoning engine to interact with the world on its own. A chatbot is response-oriented: you ask, it answers, the loop closes. An agent is results-oriented: you assign a goal, and it plans, acts, observes what happened, and iterates until the goal is met or it gets stuck.

The mechanism is something called a ReAct loop: reason, then act, then reason again about what the action revealed. Imagine telling an agent: “Book me a flight to New York for under $400.” The agent reasons through the steps it needs to take, calls a flight search API, and finds that the cheapest option is $450. Instead of giving up, it reasons again: maybe a different week is cheaper. It searches again, finds a $380 fare two months out, and comes back to you for confirmation. The loop is what makes it feel less like software and more like a junior employee.
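To make the loop concrete, here is a minimal Python sketch of the flight example, under loud assumptions: `search_flights` is a hypothetical tool backed by made-up fares rather than a real flight API, and `reason` is a hand-written rule standing in for the language model that would do the reasoning in a real agent. What it is meant to show is the shape of the loop: reason about the next step, act by calling a tool, record the observation, and repeat until the goal is met or the step budget runs out.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

# Made-up fares keyed by "weeks from today". This is a toy stand-in for a
# real flight-search API; weeks missing from the dict have no data.
TOY_FARES = {0: 450, 1: 460, 2: 430, 4: 410, 8: 380}


def search_flights(weeks_out: int) -> Optional[int]:
    """Hypothetical tool: cheapest fare for a given week, or None if unknown."""
    return TOY_FARES.get(weeks_out)


@dataclass
class AgentState:
    budget: int
    # Each observation is (weeks_out, price or None) from one tool call.
    observations: list[tuple[int, Optional[int]]] = field(default_factory=list)


def reason(state: AgentState) -> tuple[str, int]:
    """Stand-in for the model's reasoning step: choose the next action based on
    everything observed so far. A real agent would prompt a model here."""
    for weeks_out, price in state.observations:
        if price is not None and price < state.budget:
            # Goal met: hand back to the human instead of booking unilaterally.
            return "confirm_with_user", weeks_out
    # Goal not met yet: hypothesize that the next week out might be cheaper.
    return "search", len(state.observations)


def run_agent(budget: int, max_steps: int = 12) -> str:
    state = AgentState(budget=budget)
    for _ in range(max_steps):
        action, weeks_out = reason(state)               # reason
        if action == "confirm_with_user":
            price = dict(state.observations)[weeks_out]
            return f"Found ${price} departing in {weeks_out} weeks. Book it?"
        price = search_flights(weeks_out)               # act
        state.observations.append((weeks_out, price))   # observe
    return "Stuck: no fare under budget; escalating to a human."


print(run_agent(400))  # → Found $380 departing in 8 weeks. Book it?
```

With a $400 budget, the sketch works forward week by week, skipping weeks with no data, until it observes the $380 fare eight weeks out and stops to ask for confirmation rather than booking on its own. Capping the loop with `max_steps` is one simple way to make "gets stuck" an explicit outcome instead of an endless search.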

Anthropic’s Cowork, which can operate on the files on your computer the way a person would, is an early consumer-facing example. The more interesting versions are happening inside companies, where agents are being wired into internal systems to do work that previously required a human to sit at a keyboard.

This is the line that matters. Previous AI compressed the cost of thinking. Agents compress the cost of doing. ChatGPT could write you a memo. It could not send the memo, file it in the right folder, update the CRM, and tell three colleagues it was done. Each of those small acts of execution still required a human, which meant the bottleneck in most organizations wasn’t intelligence; it was the sheer number of hands needed to translate intelligence into outcomes. Agents collapse that bottleneck. They don’t just generate the artifact; they move it through the world. When the cost of execution falls toward zero, you don’t need as many people whose primary job is execution.

I work in strategy at a bank, so this is the industry I see most clearly, and the picture is striking once you start looking at how work actually flows through the organization. In fraud operations, investigators spend the bulk of their time reviewing flagged cases: pulling transaction histories, cross-referencing device data, checking the customer’s recent contact with the bank, and looking for the telltale patterns of account takeover or first-party fraud. A human investigator might get through a few dozen cases a day. An agent working alongside them, or eventually in their place for the more routine tiers, can triage hundreds, escalate the genuinely ambiguous ones, and let the human focus where judgment actually matters.

Customer service in commercial and personal banking is a more visible example. Today’s setup in most banks is what I’d call the chatbot-assisted human: a service rep gets an inquiry, uses an internal chatbot to surface the customer’s profile and recent interactions, drafts a response, logs the resolution in the relevant system, and sometimes schedules a follow-up. The chatbot speeds up each individual step, but the human is still the connective tissue between them. The work is faster, but it’s still bottlenecked on a person doing it.

An agent collapses that whole sequence into a single end-to-end action. It reads the inquiry, retrieves the profile, interprets the request, takes the action — refund, dispute, account update — logs the outcome, and follows up on its own. The human stops being the connective tissue and starts being the exception handler: the person who steps in only when the agent flags something it isn’t confident about. That’s the difference between augmenting a workflow and removing the human from the loop.
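The exception-handler pattern can be sketched in a few lines of Python. Everything here is hypothetical: `PROFILES` stands in for the bank's customer systems, `interpret` fakes the model's interpretation step with keyword matching, and the 0.8 `CONFIDENCE_THRESHOLD` is an arbitrary illustrative value, not a recommendation. The point is the control flow: the agent runs the whole sequence itself and routes a case to a human only when it cannot find the customer or its confidence falls below the threshold.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical cut-off; where to set it is a risk decision, not a constant.
CONFIDENCE_THRESHOLD = 0.8

# Toy in-memory stand-in for the bank's customer systems.
PROFILES = {"C-100": {"name": "Ana", "segment": "personal"}}


@dataclass
class Outcome:
    handled_by: str  # "agent" or "human"
    action: str      # what was done, or "escalate"
    log: list[str] = field(default_factory=list)  # stands in for system logging


def interpret(inquiry: str) -> tuple[str, float]:
    """Hypothetical interpretation step: map an inquiry to an action and a
    confidence score. A real agent would ask a model; keywords fake it here."""
    text = inquiry.lower()
    if "refund" in text:
        return "refund", 0.95
    if "dispute" in text:
        return "dispute", 0.90
    if "address" in text:
        return "account_update", 0.85
    return "unknown", 0.30


def handle_inquiry(customer_id: str, inquiry: str) -> Outcome:
    log: list[str] = []
    profile = PROFILES.get(customer_id)                 # retrieve the profile
    if profile is None:
        log.append(f"no profile found for {customer_id}; escalated to human")
        return Outcome("human", "escalate", log)
    action, confidence = interpret(inquiry)             # interpret the request
    log.append(f"interpreted as {action} (confidence {confidence:.2f})")
    if confidence < CONFIDENCE_THRESHOLD:
        # The human is the exception handler: only unclear cases reach them.
        log.append("below threshold; escalated to human")
        return Outcome("human", "escalate", log)
    # A real agent would call the bank's systems here; the sketch only logs it.
    log.append(f"executed {action} for {profile['name']}")
    # Likewise, a real agent would schedule the follow-up rather than log it.
    log.append("follow-up scheduled")
    return Outcome("agent", action, log)


for msg in ["Please refund the duplicate fee", "Something weird happened"]:
    result = handle_inquiry("C-100", msg)
    print(result.handled_by, result.action)
# → agent refund
# → human escalate
```

A clear refund request flows straight through the agent; a vague message lands with a human. In practice, where to set the threshold is a question of risk appetite rather than engineering.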

The same logic shows up on the deal side in capital markets. A junior analyst’s week is dominated by recurring tasks — pulling comparable company data, building three-statement models and formatting pitch decks. An agent built on top of the firm’s data infrastructure can pull the comps, populate the operating case from filings, lay in a debt schedule, run sensitivities, and draft the memo with assumptions footnoted. Tools like Rogo are explicitly building this for finance, and Anthropic’s own finance offerings point in the same direction.

What’s striking about these examples is that they’re not the same kind of work. Fraud investigation, customer service, and deal modeling sit in completely different parts of the bank, with different systems, different risk profiles, and different cultures. But the underlying shape of the work is the same: humans acting as the connective tissue between a set of information systems and a set of decisions. Agents are very good at being connective tissue.

What this means for hiring is that you need fewer humans whose primary value is workflow execution, and the ones you keep are doing different work. Less data assembly, more judgment. Less log-and-route, more handling the cases the agent escalated. Meta has been signaling something similar with its push toward flatter organizations — fewer middle managers, more direct lines from idea to output.

The piece of all this that I find most genuinely new has nothing to do with replacement and everything to do with leverage: agents work when you don’t. The Friday-night-to-Monday-morning workflow I opened with is an early version of what might be called “the dark factory of knowledge work,” a reference to the lights-out manufacturing facilities in China that run continuously without human workers on the floor. For a small team, this is transformative. A two-person startup with a stable of agents can match the throughput of a ten-person team that only works business hours. For a researcher, it means launching a dozen literature reviews on Friday and walking in Monday to a synthesized landscape of every relevant paper.

The implications for fields like drug discovery, materials science, and clinical research are larger than the implications for any given knowledge job. The rate-limiting step in a lot of science isn’t ideas — it’s the patient, expensive labor of running experiments, analyzing results, and deciding what to try next. If agents can compress that loop from weeks to hours, the pace of discovery itself changes.

Most commentary on AI agents stops at knowledge work, but that’s a failure of imagination. Agents are the software brain that physical robots have been waiting for. The bet behind Figure, Boston Dynamics, Tesla’s Optimus, and the broader humanoid robotics push is that the limiting factor in robotics was never the hardware. It was the reasoning layer, the ability to take a vague instruction (“clean up the kitchen”) and decompose it into a sequence of physical actions, adapt when something goes wrong, and recover when a glass falls off the counter. That’s exactly what an agent does, except the API calls are to motors instead of databases.

If the LLM-as-reasoning-engine pattern holds up in physical environments, and the early demos suggest it does, then the same dynamic that’s reshaping the analyst bullpen will eventually reach the warehouse, the construction site, and the back of a restaurant. The timeline is longer, the capital costs are higher, and the failure modes are more dangerous. But the underlying logic is the same: cheaper execution, applied to a different substrate.

A reasonable reader may push back on my argument at this point, and they should. Today’s agents are unreliable on tasks that take longer than about thirty minutes. They hallucinate citations, miss obvious context, and fail in ways no junior analyst would. Demos are cherry-picked. Most enterprise pilots are quietly underwhelming. The internet is full of people declaring AI capabilities that don’t survive contact with a real workflow. All of this is true, and none of it answers the right question. For anyone planning a workforce three to five years out, the key consideration is whether the reliability curve is bending fast enough that the work an analyst does in 2027 looks meaningfully different from the work an analyst does in 2025. On the evidence (model capability gains, the rate of tooling improvement, the volume of capital flowing into agent infrastructure), the answer is almost certainly yes.

I’d also push back on the fear that AI is already driving widespread job losses. A lot of what’s currently being blamed on AI is, in my read, the cleanup from pandemic-era overhiring. Companies bloated through 2021 and 2022, and “AI efficiency” is a more flattering reason to lay people off than “we hired too many.” The evidence that the most aggressive AI replacement story is overstated is sitting in plain sight: India’s services economy, the canonical example of work that AI agents should hollow out first, is still growing. If agents were already the existential threat the headlines suggest, that wouldn’t be the case.

If the trajectory holds, the bank of 2030 has roughly half the analyst headcount of the bank of 2024 and produces twice the deliverables. The associate role, as it exists today, is partially automated and partially elevated — the people in those seats spend less time building models and more time pressure-testing the agent’s models, talking to clients, and making judgment calls that an agent can’t underwrite.

A more interesting question: if the bottom rung of the ladder is automated away, where do future executives come from? How do you train judgment in people who never had to build the model themselves? That’s a real problem, and it’s not one the agent vendors are going to solve.