The Ghost in the Machine

What the strange history of the ATM tells us about whether AI will complement our work or render it obsolete

There is a story about technology and employment that, once you hear it, lodges itself permanently in the mind. It is a story about the automated teller machine — the ATM — and the curious fact that a device literally named after the job it was supposed to eliminate did not, in fact, eliminate that job.

The first ATM in the United States was installed at a branch of Chemical Bank on Long Island in 1969. Growth was slow through the nineteen-seventies, but by 1990 there were roughly a hundred thousand machines scattered across the country. By the turn of the millennium, the number had surged past four hundred thousand. In 1975, there were about thirty-one ATMs per million Americans; by 2000, that figure had grown to 1,135 — a thirty-seven-fold increase in just twenty-five years. The economics were irresistible: ATMs carried a large upfront installation cost but operated at a fraction of the ongoing expense of a human employee. If any technology was destined to destroy a category of work, this was it.

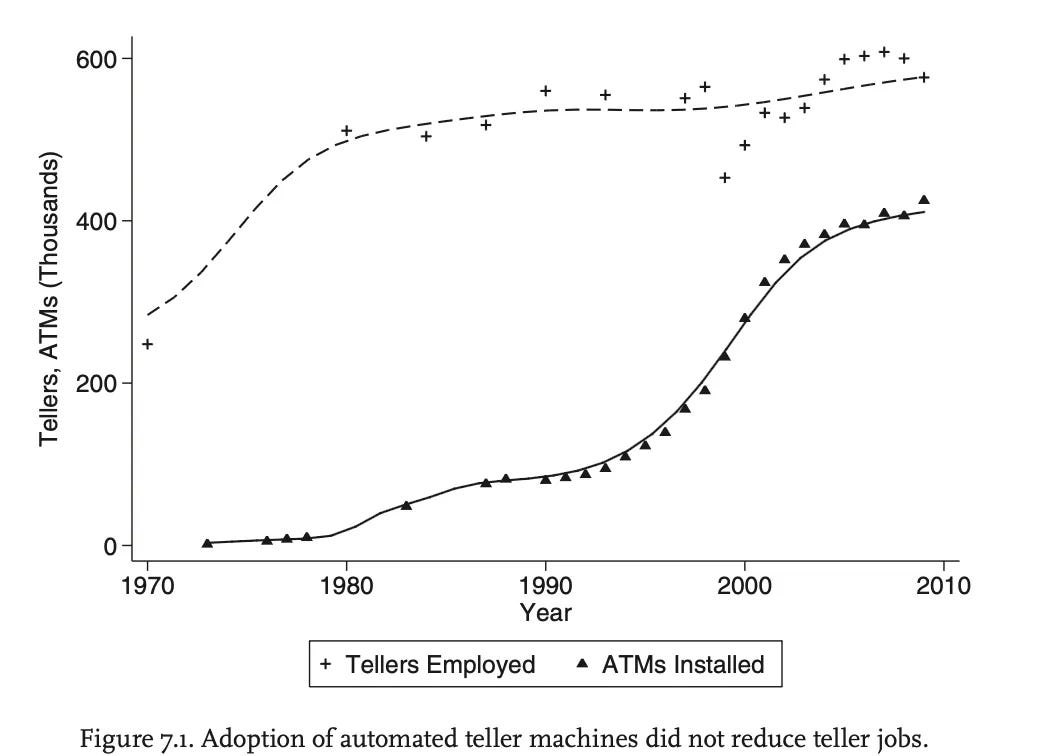

And yet the destruction never came. As the economist James Bessen of Boston University documented in his influential research, the number of full-time bank tellers in the United States actually grew alongside the ATM boom — from roughly three hundred thousand in 1970 to over six hundred thousand by the early 2000s. What happened was more subtle than simple replacement. ATMs reduced the number of tellers needed to run a single branch — from about twenty to thirteen between 1988 and 2004 — but they also reduced the cost of opening a branch in the first place. Banks, competing furiously for market share, responded by opening more locations. Urban bank branches increased forty-three percent. Fewer tellers per branch, but far more branches in total. The math worked out in the tellers’ favor.
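The branch arithmetic above can be checked in a few lines. The sketch below is a back-of-envelope decomposition, not Bessen's own method. It assumes total teller headcount is simply tellers per branch times number of branches, and it applies the forty-three percent urban-branch figure as if it held for all branches, which the figures quoted here do not establish. The variable names are illustrative.

```python
# Back-of-envelope check: total tellers = tellers per branch x number of branches.
# Assumption: the 43% urban-branch growth is applied to every branch, and the
# per-branch figures (1988 to 2004) are treated as covering the same period.

tellers_per_branch_1988 = 20
tellers_per_branch_2004 = 13
branch_growth = 0.43  # urban branches, as quoted in the text

per_branch_ratio = tellers_per_branch_2004 / tellers_per_branch_1988
branch_ratio = 1 + branch_growth
headcount_ratio = per_branch_ratio * branch_ratio

# Branch growth that would keep total headcount exactly flat
break_even_growth = 1 / per_branch_ratio - 1

print(f"Tellers per branch: {per_branch_ratio:.2f}x")
print(f"Branches:           {branch_ratio:.2f}x")
print(f"Implied headcount:  {headcount_ratio:.2f}x")
print(f"Break-even branch growth: {break_even_growth:.0%}")
```

On these two figures alone, the effects roughly offset: implied headcount comes out a little below its starting level. The growth in total teller employment described above would therefore have to reflect factors this two-number sketch leaves out.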

More important than the arithmetic was the transformation in what tellers actually did. Freed from the low-value drudgery of counting cash and processing checks, they migrated toward relationship banking — advising customers on mortgages, selling financial products, handling the messy, human problems that no machine could resolve. The job changed. Employment didn’t decline. Banks even started hiring more college graduates into teller roles, and there is evidence that wages edged upward. The ATM, in short, was complementary: it automated the routine, elevated the complex, and expanded the overall market for human labor.

This is the version of the story that technologists and policymakers have cited for years as reassurance against automation anxiety. It was the version that J. D. Vance, now Vice President, invoked during his 2024 debate with Tim Walz. It is a good story, and it contains real insight about the difference between automating tasks and eliminating jobs. But it also has a second act that most people leave out.

Beginning around 2010, the number of bank tellers in the United States entered a steep, sustained decline. By 2016, full-time teller employment had fallen to roughly 235,000 — down from 332,000 just six years earlier. By 2022, the figure had cratered to about 164,000. The Bureau of Labor Statistics now projects a further thirteen-percent decline through 2034. This was not a delayed ATM shock; the ATM had reached saturation long before. It was the effect of something else entirely — something that had nothing to do with banking.
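The size of that decline is easier to judge as a rate. The sketch below uses only the three employment figures quoted above, which are themselves approximate; the helper names are illustrative.

```python
# Full-time teller employment as quoted in the text (approximate figures).
tellers = {2010: 332_000, 2016: 235_000, 2022: 164_000}


def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100


def annualized_change(start: float, end: float, years: int) -> float:
    """Compound annual rate of change, in percent."""
    return ((end / start) ** (1 / years) - 1) * 100


years = sorted(tellers)
for y0, y1 in zip(years, years[1:]):
    change = pct_change(tellers[y0], tellers[y1])
    print(f"{y0} to {y1}: {change:.1f}%")

total_change = pct_change(tellers[2010], tellers[2022])
annual_rate = annualized_change(tellers[2010], tellers[2022], 2022 - 2010)
print(f"2010 to 2022: {total_change:.1f}% overall, {annual_rate:.1f}% per year")
```

Taken at face value, the figures imply that teller employment roughly halved in twelve years, a compound decline of a little under six percent a year.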

It was the iPhone.

Apple introduced the iPhone in 2007. Within a few years, mobile banking applications had rendered the physical branch visit optional for a growing majority of customers. Bank branches in the United States peaked at approximately 99,550 in 2009 and have fallen by more than twenty-one thousand since, a decline of roughly twenty-two percent. When people stopped going to branches, banks stopped needing branches. When branches closed, tellers became redundant. The ATM had automated what tellers did. The iPhone automated the reason people visited tellers. The first technology created a new equilibrium. The second collapsed it.

The distinction is important because it maps onto two fundamentally different theories of how new technologies reshape labor markets. The ATM was task automation — the substitution of a machine for a specific, bounded activity within a broader workflow. Task automation tends to be complementary: it removes the routine, increases productivity, and often expands total employment by reducing costs and growing the addressable market. The iPhone, by contrast, enabled something closer to paradigm automation — the construction of an entirely new infrastructure that bypassed the old workflow altogether. Digital banking did not improve the experience of visiting a branch. It eliminated the need to visit one.

That new infrastructure, it is worth remembering, took decades to assemble. It required fiber-optic networks for connectivity, semiconductor advances for processing power, the invention of the personal computer, the development of the smartphone, and the creation of mobile application platforms and digital payment networks. PayPal, the fintech pioneer, started out literally trying to solve the problem of sending money via email. Amazon, in its earliest days as an e-commerce platform, accepted customer payments by mail-in check. The paradigm shift that ultimately rendered bank tellers obsolete was not a single breakthrough but the slow, compounding accumulation of an entirely new technological substrate — one whose implications took a generation to fully materialize.

This brings us, inevitably, to the question everyone in business, government, and the labor force is now asking: is artificial intelligence the ATM, or is it the iPhone?

Had you asked me this question in early 2023, when ChatGPT had just exploded into public consciousness, my answer would have leaned firmly toward complementary. At that stage, the large language models were useful assistants — capable of drafting a rough memo, debugging a Python script, or summarizing a dense research paper, but fundamentally dependent on a human operator to direct them, evaluate their output, and integrate the results into a broader workflow. They were, in the language of the ATM analogy, automating tasks within existing jobs. They were making people faster, not making people unnecessary.

Three years later, that assessment requires revision.

The change has been driven not just by improvements in raw model capability — though those improvements have been dramatic — but by a qualitative shift in how AI systems are deployed. The era of the chatbot, in which a human typed a question and received an answer, is giving way to the era of the agent, in which AI systems autonomously plan, execute, and complete multi-step workflows with minimal human oversight.

Consider the trajectory. In 2023, using an AI model productively required sustained, skilled interaction — prompt engineering, iterative refinement, careful verification. By late 2025, agentic AI platforms had emerged that could pull files, read documents, write and execute code, communicate across tools, and deliver finished outputs while their human operators stepped away from the keyboard. The shift from “AI as research assistant” to “AI as autonomous worker” is the difference between a calculator and a self-driving car. One augments a human capability; the other performs the function end to end.

The velocity of this transition is visible in the startup ecosystem. At Y Combinator’s Winter 2025 Demo Day, CEO Garry Tan revealed that the batch was growing at ten percent per week in aggregate revenue — a pace he described as unprecedented in early-stage venture history. For roughly a quarter of YC startups, ninety-five percent of their code had been written by AI. Some firms were reaching ten million dollars in annual revenue with teams of fewer than ten people. By the Summer 2025 batch, over sixty percent of YC companies explicitly referenced AI in their pitch, and more than half were building agentic solutions — autonomous systems designed to own entire workflows rather than merely assist with fragments of them.
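To see what ten percent weekly growth amounts to, the sketch below compounds it over a few horizons. The horizons are assumptions chosen for illustration: twelve weeks stands in for the rough length of a batch, which the text does not specify, and sustaining the rate for a full year is purely hypothetical.

```python
# Compounding a constant weekly growth rate.
# Assumption: the 10% rate holds every week, which real revenue rarely does.

weekly_growth = 0.10


def growth_multiple(rate: float, weeks: int) -> float:
    """Revenue multiple after compounding `rate` for `weeks` weeks."""
    return (1 + rate) ** weeks


for weeks in (4, 12, 52):
    multiple = growth_multiple(weekly_growth, weeks)
    print(f"After {weeks:>2} weeks: {multiple:.2f}x starting revenue")
```

At that pace, revenue roughly triples over twelve weeks, which is why a rate that sounds modest week to week is so unusual when held across a whole cohort.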

The most extreme illustration of this new reality may be Medvi, a telehealth startup founded in September 2024 by Matthew Gallagher, a forty-one-year-old entrepreneur from Los Angeles. Gallagher launched the company with twenty thousand dollars in capital and more than a dozen AI tools. He used large language models to write the code for his platform, generate website copy, produce images and videos for advertisements, and handle customer service. He outsourced the regulated medical infrastructure — doctors, pharmacies, shipping, compliance — to partner platforms. In its first full year of operation, Medvi generated four hundred and one million dollars in sales and served more than 250,000 customers, all with a workforce of two people: Gallagher and his brother. The company posted a net profit margin of 16.2 percent — nearly three times the margin of Hims & Hers, a competitor with over 2,400 employees. (It should be noted that Medvi has also attracted scrutiny: the FDA issued a warning letter in early 2026 regarding its compounded GLP-1 products, and the company faces allegations of misleading AI-generated advertisements, including deepfake before-and-after images. The story is remarkable, but it is not uncomplicated.)

The Medvi case is striking not because it is typical but because it was not even conceivable three years ago. Sam Altman, the CEO of OpenAI, had predicted that AI would eventually enable a single person to build a billion-dollar company. When the New York Times profiled Medvi in April 2026, Altman said he had won a bet with his tech CEO friends about when such a company would appear.

Duolingo offers a different but equally telling example. The language-learning company launched its chess course in 2025 — a product conceived and built by two employees who had no prior coding experience and no background in competitive chess. Using AI tools to scaffold lesson logic, write content, and prototype the product, they shipped a course that became Duolingo’s fastest-growing offering, surpassing one million daily active users within months. The course was developed not by domain experts wielding traditional tools, but by novices wielding extraordinarily powerful ones.

If AI were merely an ATM — a tool that automated specific tasks while leaving the broader structure of work intact — the market would be pricing it as a productivity enhancer for existing firms. That is not what the market is doing. In early February 2026, the enterprise software sector experienced what traders called the “SaaSpocalypse” — a two-hundred-and-eighty-five-billion-dollar sell-off triggered by investor fears that AI agents could replace entire categories of commercial software. Within forty-eight hours, ServiceNow dropped seven percent, Salesforce fell seven percent, Intuit plunged eleven percent, Thomson Reuters suffered its worst single-day decline on record, and LegalZoom sank nearly twenty percent. By April 2026, ServiceNow’s stock sat forty-seven percent below its July 2025 peak; Intuit had erased four years of gains, trading at roughly half its 2025 high and posting its worst S&P 500 performance of the new year.

The market’s judgment, in other words, is that AI is not complementary to the existing software ecosystem — it is potentially substitutive of it. Investors are not betting that AI will help ServiceNow sell more seats. They are betting that AI agents may eliminate the need for seats in the first place. The parallel to digital banking is hard to miss: just as the iPhone didn’t improve the branch experience but bypassed the branch entirely, agentic AI threatens not to improve existing SaaS workflows but to bypass the SaaS layer altogether.

This reading is, of course, debatable. Research from MIT Sloan finds that AI’s employment effects depend critically on the breadth of task automation within a given role. When AI automates only some tasks, employment often increases, as workers redirect their efforts toward higher-value activities — precisely the ATM dynamic. When AI automates most tasks within a role, however, firm-level employment in that role drops by approximately fourteen percent. The critical variable is not whether AI can perform a task, but whether, once it performs enough tasks, the remaining human role retains sufficient value to justify the position.

The World Economic Forum has projected that AI and related technologies will generate roughly 170 million new jobs globally by 2030 while displacing 92 million — a net gain of 78 million positions. But aggregate numbers conceal enormous distributional pain. The jobs that are created are not the same jobs that are destroyed, and the people who lose positions in one category are rarely the ones who fill openings in another. A displaced paralegal does not effortlessly become a machine-learning engineer. A laid-off customer service representative does not seamlessly transition into an AI product manager. The economic adjustment, even in optimistic scenarios, will be wrenching for millions of workers — and unlike previous technological transitions, it may unfold at a pace that outstrips the capacity of educational institutions and labor markets to adapt.

What distinguishes the current moment from earlier waves of automation is the speed of infrastructure buildout. The digital revolution that eventually displaced bank tellers required decades of sequential development: fiber-optic cables, then semiconductors, then personal computers, then smartphones, then mobile applications. Each layer depended on the one before it, and the full stack took the better part of forty years to assemble. The AI infrastructure, by contrast, is being constructed with trillions of dollars of concurrent investment in a compressed timeframe. The foundational models are already powerful and improving rapidly. The agentic orchestration layer is emerging in real time. The application layer — specific tools and workflows built atop agents — is proliferating faster than anyone predicted.

This acceleration means that the interval between “AI as productivity tool” and “AI as autonomous worker” is likely to be years, not decades. The ATM-to-iPhone transition in banking played out across a generation. The analogous transition in AI may play out across a business cycle. For firms, the implication is that workforce strategies premised on gradual, incremental adaptation — the assumption that employees will have time to learn new skills, that organizational structures will evolve slowly, that the competitive landscape will shift predictably — may badly underestimate the pace of change.

For policymakers, the challenge is equally urgent. The complementary phase of AI, the ATM phase, is the window in which to invest in retraining programs, educational reform, and social safety nets. Once AI shifts decisively from complementary to substitutive, from automating tasks within jobs to eliminating the rationale for those jobs, the political and social costs become far harder to manage. The precedent of bank tellers is instructive. Nobody organized massive retraining programs for displaced tellers because the decline happened gradually, across years, and was absorbed by the broader labor market. But the potential scale of AI-driven displacement is far larger, and the pace may be far faster.

The honest answer to the question — is AI the ATM or the iPhone? — is that it is both, simultaneously, in different domains and on different timescales. For some categories of work, AI will function as a powerful complement: it will automate the routine, elevate human judgment, and expand the overall market for skilled labor. For others, it will enable entirely new business architectures that bypass existing workflows altogether — architectures in which a two-person startup generates hundreds of millions in revenue, in which a language-learning app ships a chess course built by non-experts, in which software companies that dominated for decades see their market capitalizations halve in months.

The paradigm shift is not coming. It is here. The ATMs gave bank tellers a generation to adapt. AI may not be so generous with the rest of us.