Why We Can’t Build Data Centers Fast Enough

Sunday Special

About 55 kilometers from Washington, D.C., in Ashburn, Virginia, stand many large concrete buildings that look like warehouses but are not. They are data centers, the facilities behind cloud platforms such as Amazon Web Services (AWS), Google Cloud, and Microsoft Azure, which in turn power generative-AI models like ChatGPT.

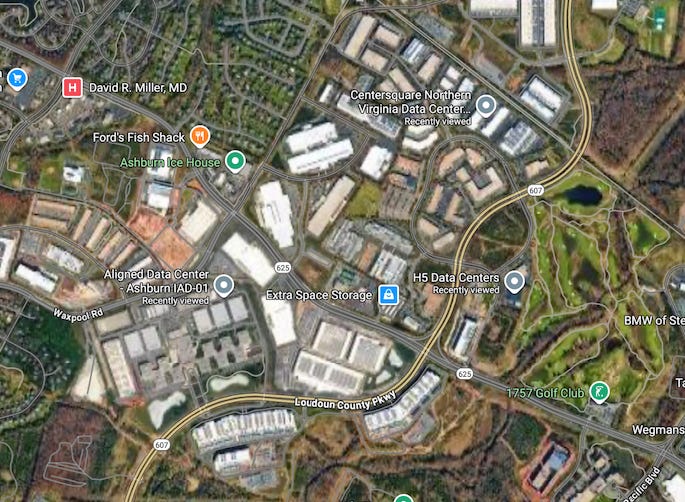

Satellite images show a cluster of data centers, including the Aligned Data Center and Center Square Northern Virginia Data Center. Many of these data centers are not new. They are a product of the rise of cloud computing, a shift in how computer systems are run: instead of running programs and storing data on their own computers, companies and individuals rent computing power from cloud providers.

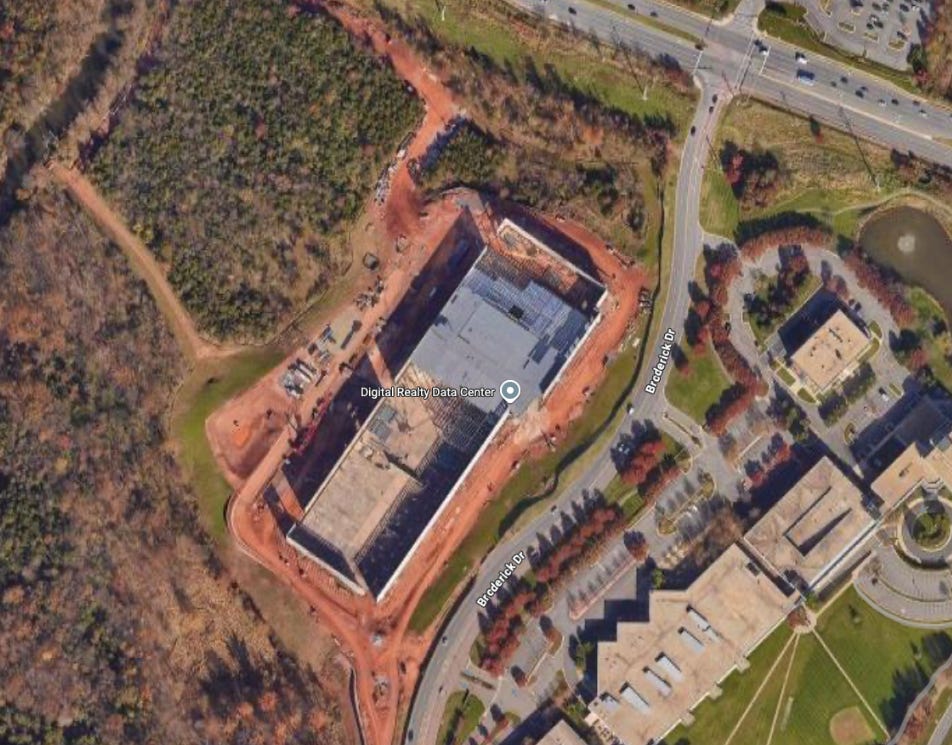

But beside these data centers, many more are under construction, like this Digital Realty facility. Data center construction has grown steadily over the last decade, which raises the question: What is driving the current boom?

The cloud data center buildout began in 2006, when Amazon launched AWS. Amazon had built large-scale infrastructure to handle the data and computing needs of its ecommerce operation, and it soon realized that other companies moving onto the internet had similar needs, so it began selling cloud computing services. Renting compute and storage from AWS let companies like Netflix stream video to global audiences, and cloud infrastructure more broadly enabled services like YouTube and Spotify to stream video and music to billions of people, displacing DVDs and CDs.

Since its launch, AWS has become critical infrastructure for the internet. During the most recent major AWS outage, in October 2025, which lasted over 15 hours, hundreds of major companies were affected, including McDonald’s, Zoom, and Robinhood. Within Amazon, AWS now matters more to profits than the ecommerce business that spawned it. In 2024, AWS generated $108 billion in revenue, roughly 17% of Amazon’s total sales but a staggering 58% of its operating profit.

For years, the cloud business grew at a rate in the high teens, but demand has surged since the launch of ChatGPT in November 2022. ChatGPT reached an estimated 100 million users in two months, making it the fastest-growing consumer application in history at the time. For reference, that is roughly one-third of the U.S. population signing up in two months.

Most people underestimate the immense computing power needed to run these AI systems. Microsoft, for example, has struck a deal to bring a shut-down nuclear reactor at Three Mile Island back online to help power the data centers whose Graphics Processing Units (GPUs) train and run AI models.

The first reason AI models are so energy-intensive is the initial training stage, in which a model analyzes and learns from massive datasets of pictures, audio, and text: essentially years of schooling compressed into weeks. Training a model like GPT-4 reportedly required tens of thousands of Nvidia GPUs running for months, consuming about as much electricity as several thousand homes use in a year.

Next is inference: the work of responding to user queries. Whether you are asking ChatGPT which dress to buy or generating an image with Gemini’s Nano Banana, you are triggering inference, and it requires serious computing power. The energy gap between output types can be massive: generating a short video can take roughly 900 times the energy of a text response. That is about the difference between the surface area of a single coffee bean and the combined surface area of four standard sheets of paper.

Rapid advances in AI increase demand for both training and inference compute, which requires more physical infrastructure and, ultimately, drives a significant rise in data center construction. Newer reasoning models, such as DeepSeek R1 and Google’s Gemini 2.5, work through problems step by step. This layered “thinking” sharply increases computational needs: a single complex reasoning query can consume the compute of roughly 10 standard queries.

Accelerating the demand for compute is the rapid adoption of AI, which has gone from a niche curiosity to foundational infrastructure. It is built into tools like Cursor for generating code, embedded in Google Search through AI Overviews to handle millions of queries a day, and available to 400 million users through Microsoft’s Copilot in Office 365. Reaching this scale in just three years is the primary source of the enormous pressure on existing compute resources.

The fundamental issue is timing. Companies like Canva can launch a product such as Canva AI and integrate it into their existing suite within months, but the physical infrastructure required to run it takes years to build. A modern hyperscale data center, which houses the servers that run AI, takes two to four years to construct. This delay creates a massive supply-demand imbalance that has, in turn, sparked the largest infrastructure buildout in a generation.

The resulting surge in demand has sharply accelerated data center construction spending over the last two years. The established hyperscalers (Amazon, Google, Microsoft, Meta, and Oracle) are driving this capital expenditure, with commitments totaling over $300 billion for data center buildouts in 2025. To put this in perspective, that single-year spend is greater than Greece’s entire projected GDP for 2024. At the same time, a new wave of competitors, the “neoclouds” such as CoreWeave, are capitalizing on the boom. They are converting existing infrastructure, such as former crypto mining facilities, and taking on substantial debt to rapidly deploy the hyperscale data centers needed to support the AI revolution.

The construction boom is reshaping the U.S. economy. In early 2025, data center capital expenditure contributed about as much to GDP growth as consumer spending did, making it a key engine of economic expansion. Meanwhile, the energy required for AI is skyrocketing, with power demand from data centers expected to almost triple by 2030. The challenge is so immense that Apollo Global Management has warned the resulting AI energy gap “will not be closed in our lifetime.”

While there is much debate about whether the current data center buildout will lead to overcapacity, a telltale sign of a bubble, the indicators so far suggest the boom will continue. Meta, for instance, raised its spending forecast in its Q3 2025 earnings report, lifting the low end of its 2025 capital expenditure guidance from $66 billion to $70 billion.

The data center expansion is one of the largest investment cycles in modern history. Driven by the belief that AI will fundamentally transform work, creativity, and nearly every aspect of life, these facilities are being built to shape the technological landscape for the next two to three decades. We are, in effect, watching the construction of the core infrastructure for the next era of human civilization.